Elly Savatia was sitting in a cinema watching Avatar when the idea came to him. On screen, actors in sensor-studded suits were having their every movement translated in real time into digital characters. The thought that struck him had nothing to do with science fiction.

It was about sign language. If motion-capture technology could map a human body onto an avatar with that kind of fidelity, he reasoned, why couldn’t it do the same for a deaf interpreter?

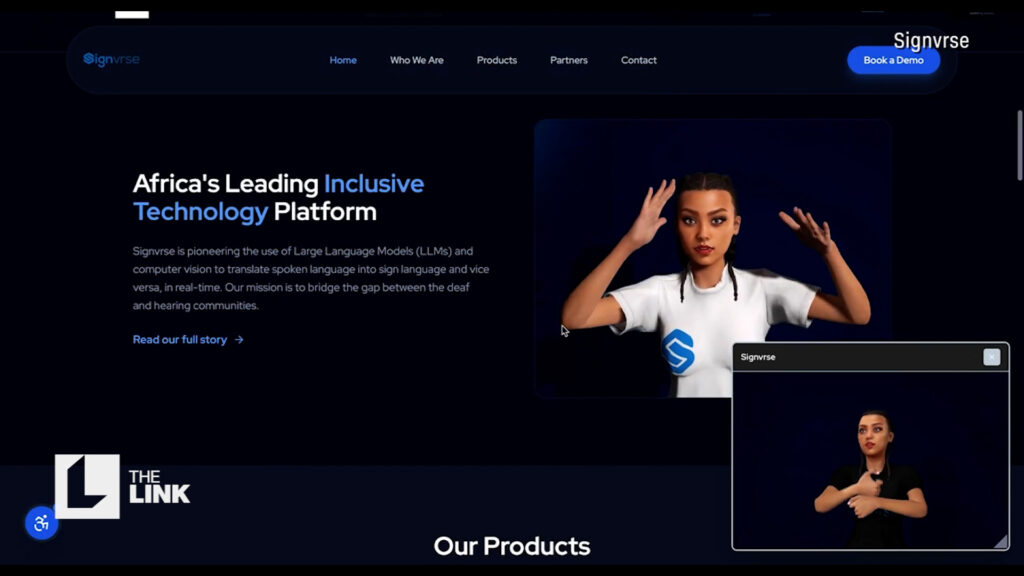

That question became Signvrse — a Kenyan tech company building what Savatia calls “the visual Google Translate for sign language.”

Inside their Nairobi studio, deaf interpreters sign into a rig of OptiTrack motion-capture cameras while the system captures not just broad gestures but the subtle facial expressions that give sign language so much of its meaning. That data trains an AI model capable of generating realistic Kenyan Sign Language animations from text, without requiring a human interpreter to be present every time. The goal is a comprehensive catalogue that any broadcaster, app developer, or public institution can draw from.

Most existing tools rely on stilted, cartoonish avatars that the deaf community largely rejects as unnatural and difficult to read. Signvrse is aiming for something far more faithful. Savatia sees the applications everywhere — TV, podcasts, healthcare, public safety announcements.

Kenya Airways is already in talks to integrate the technology into its in-flight experience, starting with real-time safety briefings.

Linda Okolo, Diversity and Disability Inclusion Champion at Kenya Airways, is direct about the motivation: “Inclusivity is not only a social imperative, it’s an economic one. There’s money on the table that we’ve been leaving by leaving [out] persons with disabilities.”

It’s a refreshing reframe. Accessibility, too often treated as a costly obligation or a box to tick, is recast as a way to reach an audience that has simply never been properly served, and a market that has been waiting all along.